Quasar Scan user guide

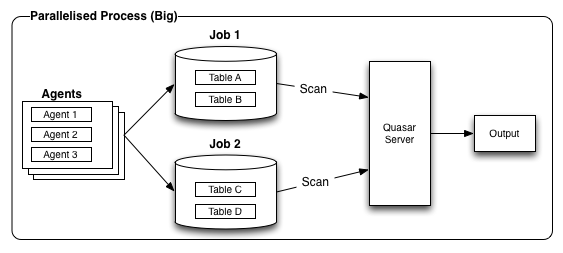

Parallelised Scans (Big)

A Big scan uses one or more agents, usually running several jobs at once against the same target, with the input space split between the jobs. The split is usually done by whitelisting files, tables, and so on for each job.

To run a large job effectively and decide how to split the input space, consider whether the scans can run during the day, how long each scan can run for, and how powerful the server is (e.g., whether it can handle multiple Quasar scans working through it at once without disruption).

We recommend completing a series of pilot scans against the target to determine the speed of the scan and how much load you can add before you begin to affect the overall performance of the server. This varies widely between organisations, environments, networks, and target types, so we are unable to provide a one-size-fits-all solution in the guide or in the shipped product. Below are some broad examples of how this can be approached. Quasar is available for consultation on tailoring the product to your specific environment.
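The sizing reasoning above can be sketched as simple arithmetic. This is a rough illustration and not part of Quasar: `jobs_needed` and its parameters are hypothetical names, and it assumes that the throughput measured in a pilot scan scales linearly with the number of parallel jobs, which a real server may not sustain.

```python
import math


def jobs_needed(total_items: int, pilot_items: int,
                pilot_seconds: float, window_hours: float) -> int:
    """Estimate how many parallel jobs are needed to finish within a scan window.

    Assumption (not from the guide): every job runs at the pilot's rate and
    jobs do not slow each other down.
    """
    if pilot_items <= 0 or pilot_seconds <= 0 or window_hours <= 0:
        raise ValueError("pilot_items, pilot_seconds and window_hours must be positive")
    rate = pilot_items / pilot_seconds            # items per second in the pilot
    single_job_hours = total_items / rate / 3600  # duration if run as one job
    return max(1, math.ceil(single_job_hours / window_hours))


if __name__ == "__main__":
    # Pilot: 10,000 items in 10 minutes; target: 1,000,000 items; window: 8 hours.
    print(jobs_needed(1_000_000, 10_000, 600, 8))
```

Treat the result as a starting point for further pilot scans rather than a guarantee, since the point at which the server's performance degrades has to be measured.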

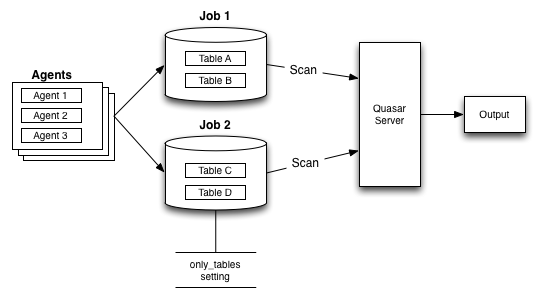

Example: Big Database

Workflow

- Configure the agent(s) with the connection information

- Run multiple jobs on sets of tables
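Splitting the tables into sets, one per job, could look like the sketch below. `split_tables` is a hypothetical helper, not a Quasar function, and the guide does not state the format in which a table set is passed to a job (for example through `only_tables`), so the sketch only produces the lists.

```python
from typing import List


def split_tables(tables: List[str], n_jobs: int) -> List[List[str]]:
    """Deal table names round-robin into at most n_jobs non-empty batches."""
    if n_jobs < 1:
        raise ValueError("n_jobs must be at least 1")
    batches = [tables[i::n_jobs] for i in range(n_jobs)]
    return [batch for batch in batches if batch]  # drop empty batches


if __name__ == "__main__":
    # Table names are made up for illustration.
    for number, batch in enumerate(split_tables(
            ["customers", "orders", "invoices", "payments", "audit_log"], 2), start=1):
        print(f"job {number}: {batch}")
```

Round-robin dealing ignores table size; if a few tables dominate the database, grouping by row count will probably balance the jobs better.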

Recommended settings to use

only_tables

Description:

Default Value:

Notes:

topnrows

Description: Use this setting to balance the need to scan everything against the need to cap the scan time. If the value is left unconfigured, scans of large tables could take an unbounded amount of time.

Default Value: 999999

Units: Number of rows to scan
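To see how a row cap bounds the work, the sketch below computes how many rows would be read from each table for a given `topnrows` value. It assumes the cap is applied to each table separately, which the guide does not state explicitly; `rows_to_scan` and the table names are hypothetical.

```python
from typing import Dict


def rows_to_scan(table_rows: Dict[str, int], topnrows: int) -> Dict[str, int]:
    """Return the number of rows scanned per table under a per-table row cap."""
    if topnrows < 0:
        raise ValueError("topnrows must not be negative")
    return {name: min(rows, topnrows) for name, rows in table_rows.items()}


if __name__ == "__main__":
    capped = rows_to_scan({"orders": 5_000_000, "users": 1_200}, 999_999)
    print(capped)                # rows read from each table
    print(sum(capped.values()))  # total rows read across the job
```

Dividing the total by the row rate measured in a pilot scan gives a rough upper bound on the job's duration.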

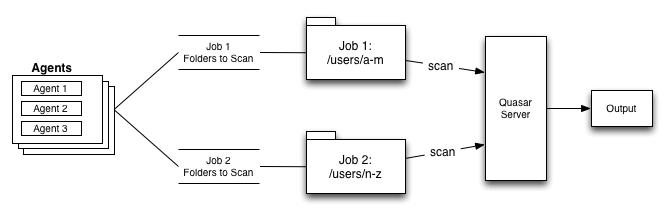

Example: Big SAN / Fileserver

Workflow

- Locate the agent(s) close to the target where possible

- Split the list of folders and run multiple jobs
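One way to split a folder list so that each job gets a similar amount of data is a greedy largest-first assignment, sketched below. This is an illustration rather than a Quasar feature: `balance_folders` is a hypothetical helper, and the folder sizes are assumed to have been measured beforehand.

```python
import heapq
from typing import Dict, List


def balance_folders(folder_sizes: Dict[str, int], n_jobs: int) -> List[List[str]]:
    """Assign folders to n_jobs lists, largest first, always to the lightest job."""
    if n_jobs < 1:
        raise ValueError("n_jobs must be at least 1")
    heap = [(0, index) for index in range(n_jobs)]  # (bytes assigned, job index)
    jobs: List[List[str]] = [[] for _ in range(n_jobs)]
    # Sort by size descending, then by name so the result is deterministic.
    ordered = sorted(folder_sizes.items(), key=lambda item: (-item[1], item[0]))
    for folder, size in ordered:
        assigned, index = heapq.heappop(heap)       # currently lightest job
        jobs[index].append(folder)
        heapq.heappush(heap, (assigned + size, index))
    return jobs


if __name__ == "__main__":
    # Paths and sizes are made up for illustration.
    sizes = {"/share/hr": 100, "/share/finance": 60, "/share/legal": 50, "/share/tmp": 10}
    for number, folders in enumerate(balance_folders(sizes, 2), start=1):
        print(f"job {number}: {folders}")
```

Byte size is only a proxy for scan time; file counts or file types may matter more in some environments, which is something the pilot scans should reveal.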

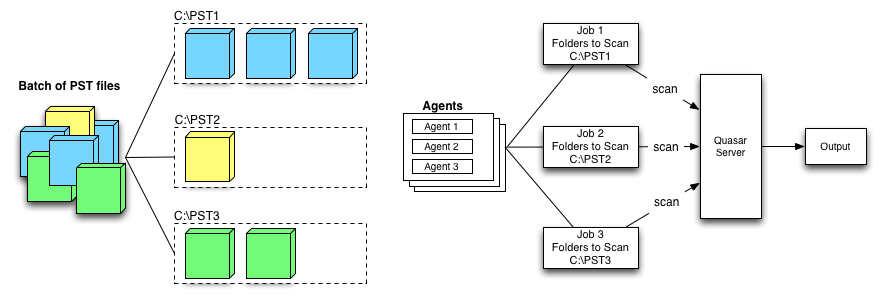

Example: PST File Collection

Workflow

- Make N folders

- Put a batch of PSTs into each folder

- Create multiple jobs, one for each folder
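The batching steps above can be sketched as a small planning script. The folder naming (`batch_01`, `batch_02`, ...) and the helper names are assumptions made for illustration; nothing here is prescribed by Quasar. The plan is computed first so it can be reviewed before any file is moved.

```python
import shutil
from pathlib import Path
from typing import Dict, Iterable


def plan_pst_batches(pst_files: Iterable[str], dest_root: str,
                     n_folders: int) -> Dict[Path, Path]:
    """Map each PST file to a destination inside one of n_folders batch folders."""
    if n_folders < 1:
        raise ValueError("n_folders must be at least 1")
    root = Path(dest_root)
    plan: Dict[Path, Path] = {}
    taken = set()
    for index, source in enumerate(sorted(Path(p) for p in pst_files)):
        target = root / f"batch_{index % n_folders + 1:02d}" / source.name
        if target in taken:  # two sources with the same file name would collide
            raise ValueError(f"duplicate destination: {target}")
        taken.add(target)
        plan[source] = target
    return plan


def apply_plan(plan: Dict[Path, Path]) -> None:
    """Create the batch folders and move each PST file into place."""
    for source, target in plan.items():
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.move(str(source), str(target))


if __name__ == "__main__":
    for source, target in plan_pst_batches(
            ["in/a.pst", "in/b.pst", "in/c.pst"], "out", 2).items():
        print(source, "->", target)
```

Each resulting batch folder then becomes the target of its own job.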